Levenshtein Edit Distance in NLP: Measuring String Similarity in Python

In Natural Language Processing (NLP), assessing and comparing how similar two strings are is a core capability. Whether you are fixing typos, spotting plagiarism, matching names, or judging machine-generated text, being able to quantify how “close” two strings are can noticeably improve the effectiveness of language-driven applications.

One of the most widely recognized and frequently used methods for this purpose is the Levenshtein Edit Distance. This approach calculates the similarity between two strings by measuring the minimum number of changes required to transform one into the other. Often referred to simply as edit distance, it is commonly applied in NLP systems, spell checkers, search engines, and fuzzy matching processes. In this article, we’ll explore how to implement Levenshtein Distance in Python, compare available libraries, evaluate performance considerations, and examine its role in modern NLP and LLM-driven workflows.

Key Takeaways

- Levenshtein Distance is a core string-similarity metric that computes the minimum number of single-character edits (insertions, deletions, substitutions) needed to convert one string into another.

- It is commonly applied in spell checking, fuzzy search, autocorrect, plagiarism detection, and bioinformatics.

- The approach relies on dynamic programming, usually via a matrix, producing deterministic and exact outcomes.

- Python packages such as Levenshtein, fuzzywuzzy, and distance make practical implementations easier for real-world use.

- Despite its strengths, Levenshtein Distance can be computationally heavy and does not reflect semantic meaning, which makes it less suitable for deeper NLP tasks involving full-text semantics.

- Other measures—such as Jaro-Winkler, Damerau-Levenshtein, Jaccard, and Cosine Similarity—provide different advantages depending on the scenario (for example: names, token overlap, semantic similarity).

How Levenshtein Distance Works

Levenshtein Distance counts the smallest number of single-character edits (insertions, deletions, or substitutions) required to transform one string into another. Below is a straightforward but complete walk-through using a matrix-based example that is easy to follow:

“book” → “back”

Let:

- String 1, s1 = “book” (length = 4)

- String 2, s2 = “back” (length = 4)

Step 1: Matrix initialization

To map the strings into a matrix, we create a 5 X 5 grid so we can also represent the empty string (“ ”) at index 0.

Empty Matrix Initialization:

|

|

b |

a |

c |

k |

|

0 |

1 |

2 |

3 |

4 |

| b |

1 |

|

|

|

|

| o |

2 |

|

|

|

|

| o |

3 |

|

|

|

|

| k |

4 |

|

|

|

|

Step 2: Fill out the matrix

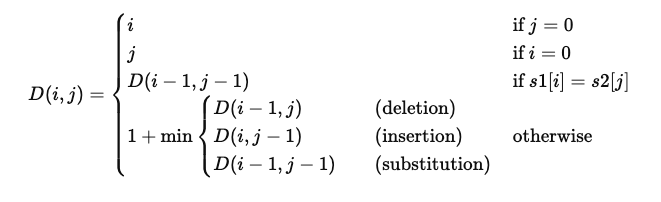

The Levenshtein distance between two strings i, j (of length | i | and | j | respectively) is expressed as D ( i, j ) where:

Let’s work through a few important cells:

Cell (1,1): Compare ‘b’ and ‘b’ → Match

D(1,1)=D(0,0)=0

Cell (2,2): Compare ‘o’ and ‘a’ → No match

D(1,2) = 1 (deletion)

D(2,1) = 2 (insertion)

D(1,1) = 0 (substitution)

D(2,2)=1+min(1,2,0)=1

Cell (3,3): Compare ‘o’ and ‘c’ → No match

D(2,3) = 2

D(3,2) = 2

D(2,2) = 1

D(3,3)=1+min(2,2,1)=2 Continue this process…

Step 3: Final Matrix

|

|

b |

a |

c |

k |

|

0 |

1 |

2 |

3 |

4 |

| b |

1 |

0 |

1 |

2 |

3 |

| o |

2 |

1 |

1 |

2 |

3 |

| o |

3 |

2 |

2 |

2 |

3 |

| k |

4 |

3 |

3 |

3 |

2 |

Step 4: Final Result

Levenshtein Distance D(4,4) = 2 So, it takes 2 operations to turn “book” → “back”:

- Replace ‘o’ → ‘a’

- Replace ‘o’ → ‘c’

In the example above, we computed the edit distance while building the matrix step by step. Levenshtein Distance is obtained by evaluating insertions, deletions, and substitutions at each stage, with the final value found in the matrix’s bottom-right cell.

Implementing Levenshtein Distance in Python – Without Libraries

Below is Python code that calculates and prints the Levenshtein distance along with the matrix.

def levenshtein_distance(s1, s2):

m, n = len(s1), len(s2)

dp = [[0 for _ in range(n+1)] for _ in range(m+1)]

# Initialize base cases

for i in range(m+1):

dp[i][0] = i

for j in range(n+1):

dp[0][j] = j

# Fill the matrix

for i in range(1, m+1):

for j in range(1, n+1):

if s1[i-1] == s2[j-1]:

cost = 0

else:

cost = 1

dp[i][j] = min(

dp[i-1][j] + 1, # deletion

dp[i][j-1] + 1, # insertion

dp[i-1][j-1] + cost # substitution

)

# Print the matrix

print("Levenshtein Distance Matrix:")

print(" ", " ".join([" "] + list(s2)))

for i in range(m+1):

row = [s1[i-1] if i > 0 else " "]

for j in range(n+1):

row.append(str(dp[i][j]))

print(" ".join(row))

return dp[m][n]

# Example: "book" to "back"

s1 = "book"

s2 = "back"

distance = levenshtein_distance(s1, s2)

print(f"\nLevenshtein distance between '{s1}' and '{s2}' is {distance}")

**Levenshtein Distance Matrix:**

**b a c k**

**0 1 2 3 4**

**b 1 0 1 2 3**

**o 2 1 1 2 3**

**o 3 2 2 2 3**

**k 4 3 3 3 2**

Levenshtein distance between ‘book’ and ‘back’ is 2

Make use of Python libraries for Levenshtein distance

1. Using python-Levenshtein

!pip install python-Levenshtein

import Levenshtein

s1 = "book"

s2 = "back"

distance = Levenshtein.distance(s1, s2)

print(f"Levenshtein distance: {distance}")

2. Using fuzzywuzzy (for fuzzy matching)

!pip install fuzzywuzzy python-Levenshtein

from fuzzywuzzy import fuzz

s1 = "book"

s2 = "back"

# Fuzzy match ratio based on Levenshtein distance

ratio = fuzz.ratio(s1, s2)

print(f"Fuzzy match ratio: {ratio}%")

Note: fuzz.ratio() provides a similarity percentage, not the distance value itself.

3. Using rapidfuzz (lightweight and fast)

!pip install rapidfuzz

from rapidfuzz.distance import Levenshtein

s1 = "book"

s2 = "back"

distance = Levenshtein.distance(s1, s2)

print(f"Levenshtein distance: {distance}")

4. Using distance (simple and pure Python)

!pip install distance

import distance

s1 = "book"

s2 = "back"

distance_val = distance.levenshtein(s1, s2)

print(f"Levenshtein distance: {distance_val}")

5. Using textdistance (flexible and supports many algorithms)

!pip install textdistance

import textdistance

s1 = "book"

s2 = "back"

distance = textdistance.levenshtein(s1, s2)

print(f"Levenshtein distance: {distance}")

Complete Python Performance Benchmark

The benchmarking script below evaluates Levenshtein Distance speed across these libraries: rapidfuzz, python-Levenshtein, textdistance, and distance.

First, install the required dependencies if they are not already present:

pip install matplotlib rapidfuzz python-Levenshtein textdistance distance

import time

import matplotlib.pyplot as plt

import Levenshtein

import textdistance

import distance as dist

from rapidfuzz.distance import Levenshtein as r_lev

# Strings to compare

s1 = "book"

s2 = "back"

N = 100_000

# Store results

results = {}

# Benchmark function

def benchmark(name, func):

start = time.time()

for _ in range(N):

func(s1, s2)

end = time.time()

duration = end - start

avg_time_us = duration / N * 1e6

results[name] = (duration, avg_time_us)

print(f"{name:<25}: {duration:.4f} sec (Avg: {avg_time_us:.2f} µs)")

print("Benchmarking Levenshtein Distance (100,000 repetitions):\n")

# Run benchmarks

benchmark("rapidfuzz", lambda a, b: r_lev.distance(a, b))

benchmark("python-Levenshtein", lambda a, b: Levenshtein.distance(a, b))

benchmark("textdistance", lambda a, b: textdistance.levenshtein(a, b))

benchmark("distance (pure Python)", lambda a, b: dist.levenshtein(a, b))

# Plotting the results

libraries = list(results.keys())

total_times = [results[lib][0] for lib in libraries]

avg_times = [results[lib][1] for lib in libraries]

plt.figure(figsize=(10, 5))

bars = plt.bar(libraries, avg_times, color=['#66c2a5', '#fc8d62', '#8da0cb', '#e78ac3'])

plt.title("Levenshtein Distance Avg Time per Call (µs)")

plt.ylabel("Average Time (µs)")

plt.xlabel("Python Library")

plt.grid(axis='y', linestyle='--', alpha=0.7)

plt.tight_layout()

# Annotate bars with actual times

for bar, avg in zip(bars, avg_times):

yval = bar.get_height()

plt.text(bar.get_x() + bar.get_width() / 2, yval + 0.5, f"{avg:.2f}", ha='center', va='bottom', fontsize=9)

plt.show()

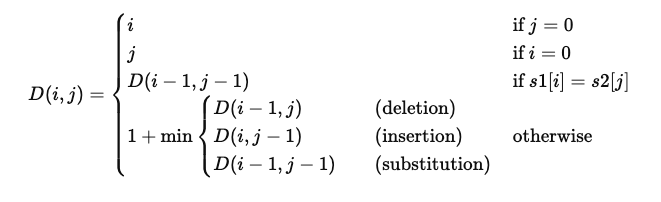

Benchmarking Levenshtein Distance (100,000 repetitions):

rapidfuzz : 0.0866 sec (Avg: 0.87 µs)

python-Levenshtein : 0.0881 sec (Avg: 0.88 µs)

textdistance : 0.3400 sec (Avg: 3.40 µs)

distance (pure Python) : 1.4420 sec (Avg: 14.42 µs)

image

Comparison with Other String Similarity Metrics

String similarity matrices are a technique where strings are represented in a structured grid to measure how similar or different the terms are. This is achieved by capturing character-level or token-level variation in a table. Such matrices are essential to algorithms like Levenshtein, Damerau-Levenshtein, and related approaches, where each matrix cell reflects the “cost” or “distance” of converting one substring into another through operations like insertion, deletion, substitution, or transposition.

Typically, the matrix starts with increasing values in the first row and first column, describing the cost of converting an empty string into the characters of the other string.

As the grid is filled row by row and column by column, each cell is computed using a recurrence rule that checks the current characters and selects the minimum cost based on earlier cells. This forms a dynamic programming matrix in which the bottom-right cell contains the final distance or similarity outcome for the full strings. These similarity matrices underpin advanced NLP use cases such as spell checking, fuzzy matching, and dataset deduplication.

Different measures focus on different goals, and may prioritize edit distance, token overlap, or phonetic similarity.

| Metric |

Type |

What it Measures |

Range |

Notes |

| Levenshtein Distance |

Edit Distance |

Minimum insertions, deletions, substitutions |

0 to ∞ |

Applies the same penalty to each character-level change |

| Damerau-Levenshtein |

Edit Distance |

Like Levenshtein but also considers transpositions |

0 to ∞ |

More practical for typos (e.g. “acress” → “caress”) |

| Hamming Distance |

Edit Distance |

Number of different positions (only for equal length) |

0 to n |

Fast, but not as adaptable |

| Jaro / Jaro-Winkler |

Similarity |

Measures character agreement and transpositions |

0.0–1.0 |

Works well for short strings and names |

| Jaccard Similarity |

Set-Based |

Intersection over union of sets/tokens |

0.0–1.0 |

Useful for longer strings or token-focused comparison |

| Cosine Similarity |

Vector-Based |

Angle between TF-IDF or word vector representations |

-1.0–1.0 |

Used in NLP workflows; requires vectorization |

| Sorensen-Dice |

Set-Based |

2 * A ∩ B |

This metric assesses the similarity between a computer-segmented image and its corresponding ground truth (the correct answer). |

|

| Soundex / Metaphone |

Phonetic |

Encodes strings by pronunciation |

Match/No match |

Best for names or pronunciation-based matching (e.g., in genealogy) |

Let us revisit the “book” and “back” example:

| Metric |

Value |

Interpretation |

| Levenshtein |

2 |

2 character edits needed |

| Damerau-Levenshtein |

2 |

Same as Levenshtein (no transposition here) |

| Hamming |

2 |

Only valid because lengths are equal |

| Jaro-Winkler |

~0.83 |

Fairly similar short strings |

| Jaccard (character set) |

0.5 |

{‘b’,‘o’,‘k’} ∩ {‘b’,‘a’,‘c’,‘k’} |

| Cosine (TF-IDF) |

Depends on corpus |

Needs vectorization |

Levenshtein distance is a strong general-purpose method for character-level string comparison, but more specialized measures can perform better in specific situations. Jaro-Winkler and Damerau-Levenshtein are especially helpful for name matching and typographical issues, since they may assign higher similarity to shared prefixes or swapped characters.

By contrast, set-based approaches like Jaccard and the Dice coefficient are more appropriate when comparing at the word or token level, which is useful for document or phrase similarity work.

To capture meaning beyond visible character changes, vector-based measures such as cosine similarity—applied to TF-IDF vectors or embeddings—enable semantic comparison in full-text or NLP scenarios. Each metric provides a distinct viewpoint on similarity, and the best choice depends on the desired granularity and the comparison context.

Applications in NLP and LLMs

Levenshtein edit distance is often treated as a foundational tool in natural language processing tasks. Because it measures how different two strings are by computing the minimum required insertions, deletions, or substitutions, it is well suited to noisy, user-generated inputs. In modern NLP pipelines and large language models (LLMs), Levenshtein distance frequently serves as a supporting component—either in preprocessing (such as cleaning, spelling fixes) or in evaluation (such as comparing generated outputs to reference answers). Below are several major applications of Levenshtein edit distance:

- Spell Checking and Auto-correction Identify and fix typos by selecting the closest dictionary term based on minimum edit distance.

- Fuzzy String Matching Match misspelled or approximately similar strings in search, autocomplete, and OCR use cases.

- Text Normalization Clean and standardize noisy or user-generated text before sending it into NLP pipelines.

- Named Entity Matching Improve entity resolution for deduplication and linking names that differ slightly.

- Evaluation of Text Generation Compare model outputs with ground truth for tasks such as summarization or translation.

- Intent Recognition in Chatbots Map user queries to predefined intents despite spelling mistakes or variations.

- Data Deduplication Find near-duplicate entries in datasets, especially where database records are inconsistent.

Pros of Levenshtein Distance

Levenshtein edit distance is straightforward to grasp and relatively simple to compute, as covered in this article. It works by counting the minimum number of single-character edits needed to convert one string into another. This clarity makes it a preferred option for many basic string-comparison tasks. Additionally, the method is language-agnostic because it operates purely on characters. It does not depend on language-specific rules, which makes it usable across many languages and character sets. The resulting distance is exact and deterministic, providing a clearly defined and repeatable measure of how strings differ.

Cons of Levenshtein Distance

Computing Levenshtein Distance can be resource-intensive because its time complexity is O(m × n) (where m and n are the lengths of the two input strings), which can be slow for long strings or very large comparison sets.

Levenshtein Distance does not account for context or meaning, since it only measures character-level changes. For instance, “cat” and “feline” produce a high edit distance even though they are semantically similar.

Standard Levenshtein distance does not handle character transpositions effectively. For example, “form” and “from” look and read similarly but yield a distance of 2. Damerau-Levenshtein addresses this more effectively.

Levenshtein distance assigns the same weight to insertions, deletions, and substitutions. In practical systems, certain edits (such as swapping visually similar characters) may be more probable or should be treated as lower-cost than others.

Because it focuses on individual characters, it is less suitable for tasks that require token- or phrase-level similarity, such as semantic matching or text classification.

FAQs

How to calculate Levenshtein distance in Python? You can use python-Levenshtein, rapidfuzz, or build a custom dynamic programming solution.

How do you calculate Levenshtein Distance in Python without a library? Use nested loops with a dynamic programming matrix, as demonstrated in the code example above.

What is the time complexity of Levenshtein Distance? O(m*n), where m and n are the lengths of the two strings.

What’s the difference between Levenshtein Distance and Hamming Distance? Hamming applies only to equal-length strings and counts mismatched positions; Levenshtein also supports insertions and deletions.

Which Python library is fastest for Levenshtein Distance? rapidfuzz is typically the quickest and most efficient choice for production scenarios.

Is Levenshtein Distance used in machine learning or LLMs? Yes. It is applied in preprocessing, deduplication, and postprocessing, and it also supports semantic matching methods within LLM pipelines.

Conclusion

Levenshtein Distance continues to be a foundational algorithm for string similarity in Python. While modern semantic models have broadened what NLP can achieve, Levenshtein still remains important for tasks that demand literal, character-level comparison. With strong libraries such as rapidfuzz and combined methods that pair edit distance with embeddings, Python developers have more options than ever for solving fuzzy string matching challenges.